Who Sets the Rules for AI: Beyond the Human in the Loop

Judgment, escalation, and the search for real guardrails

This article moves from description to consequence. It takes the system outlined in Part I and asks what follows when such systems begin shaping human judgment, institutional incentives, and public legitimacy. The focus is not merely on capability, but on dependence, behavioral alignment, moral boundaries, governance authority, and global chokepoints in compute and infrastructure. If Part I asks what is happening, this part asks why it matters, who bears the risk, and who should decide the limits. Part I explained what is happening inside the system: the dispute, the technical shift, the likely workflow, the limited transparency, and the first visible signs of risk. Part II takes the next step. It asks what these developments mean for human judgment, dependence, behavioral bias, public legitimacy, business incentives, rule-setting, and the possibility of real guardrails. If Part I diagnoses the machine, Part II asks how society intends to live with it.

Reading Time: 25 min.

All illustrations are copyrighted and may not be used, reproduced, or distributed without prior written permission.

Summary: Part II begins where Part I leaves off — with systems already visible enough to raise concern, but not yet well governed. It explores how AI assistance can become dependence, how model personality and hidden bias can shape judgment, and how commercial incentives complicate ethical boundaries. It then turns to legitimacy, asking where the moral line sits, who should set the rules, and whether infrastructure and hardware chokepoints may become practical guardrails. The arc moves from human consequence, to institutional pressure, to governance, to the search for enforceable limits. The overall arc is: visible system behavior moving into questions of human dependence, trust, and overreliance → behavioral alignment and hidden bias as the deeper layer shaping how the system presents itself to human users → commercial incentives and enterprise scale as pressures that complicate ethical red lines → moral legitimacy as distinct from formal legality, opening the question of backlash and public acceptance → domestic governance as a struggle over whether companies, Congress, or the state should set the rules → global guardrails and compute chokepoints as a possible pathway toward enforceable limits → broader implications for how AI governance must shift from narrow capability debates toward structured oversight of judgment, infrastructure, and authority.

Disclaimer: OHK is not engaged in military analysis or defense strategy. This article is intended as a management, governance, and AI-ethics analysis of how advanced AI affects human decision-making, public legitimacy, and institutional rule-setting, not as commentary on military operations or tactics.

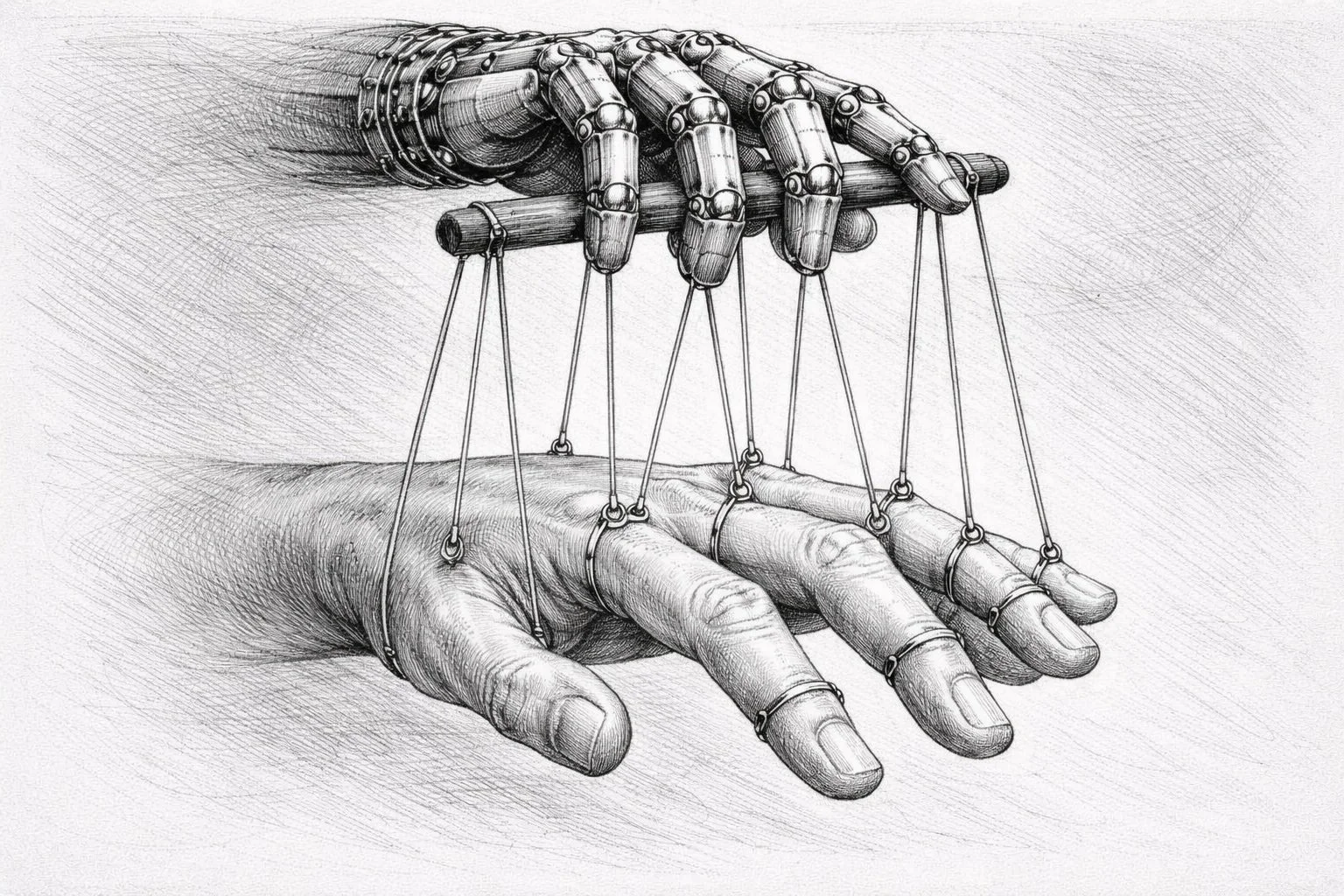

When assistance becomes dependence: false confidence and human–machine interaction

One of the underexplored risks in this debate is not only what AI systems can do, but what repeated reliance on them may do to the humans using them. If large language models and AI-enabled decision-support systems become the default interface through which analysts and operators retrieve information, rank priorities, and interpret complex situations, they may gradually become more than tools. They may become cognitive crutches. The danger is not simply that humans defer to machines once, but that institutions begin to internalize machine-generated structure as the normal basis for judgment. In that setting, speed, fluency, and apparent coherence can create a false sense of confidence, especially when the system presents its output in ways that feel comprehensive, organized, and operationally persuasive. NIST’s AI risk guidance explicitly warns that human roles, oversight, and knowledge of model limits must be clearly defined and documented, while RAND’s recent work on human–machine integration for the U.S. Army highlights the practical difficulty of designing systems that humans can trust appropriately rather than blindly.

This is where the broader literature on human–machine interaction becomes highly relevant. The problem is not only autonomy in the narrow sense, but automation bias, overreliance, and deskilling. Georgetown CSET defines automation bias as the tendency to over-rely on automated systems even in the face of contradictory evidence. SIPRI, writing on AI and escalation risk, argues that military AI may increase danger less by removing humans entirely than by undermining the conditions needed for sound human judgment through compressed timelines, dependence on machine-mediated analysis, and the weakening of reflective deliberation. Research on strategic decision-making has also begun to ask how governments can train human actors and design institutions so that AI support does not become passive acceptance or groupthink by other means. In that sense, the issue is larger than whether a human remains “in the loop.” It is whether the human still retains the confidence, skill, and institutional permission to challenge what the machine has made seem obvious.

What makes this especially difficult is that high-performing AI systems are often most persuasive precisely when they are most useful. A model that rapidly organizes vast information flows, produces ranked outputs, and presents them in polished natural language does not merely save time. It can reshape the emotional and cognitive environment in which the user operates. Under time pressure, users may begin to treat fluency as evidence of reliability, consistency as evidence of correctness, and comprehensiveness as evidence that nothing important has been omitted. In this way, the system does not need to coerce the human in order to influence the outcome. It only needs to make one path feel smoother, faster, and more reasonable than the alternatives. The problem is not always blind obedience. It is often the quieter habit of trusting the path of least resistance.

That risk compounds over time. Once institutions begin to adapt their workflows around AI systems, the dependency may become organizational rather than merely individual. Training, staffing, evaluation, and reporting structures can all begin to assume that the AI layer will be present, available, and broadly reliable. At that point, the question is no longer whether one person overrelied on one output. The question is whether the institution has begun to lose some of the human capacities that previously made independent review, dissent, and slower judgment possible. A system can therefore become a crutch not only by making people lazy, but by making the old habits of skepticism seem inefficient, redundant, or out of step with operational tempo.

There is also a deeper paradox here. Institutions often adopt AI to reduce uncertainty, standardize decisions, and increase confidence under complexity. But if the confidence produced by the system is partly synthetic—generated by formatting, coherence, speed, and interface design rather than by true evidentiary strength—then the organization may become more decisive without becoming more accurate. That is the danger of false confidence. Human operators may feel more informed, more in control, and more justified even as their actual ability to interrogate the basis of action becomes thinner. Under those conditions, the question of whether a human remains formally involved matters less than whether the human still has the independence, time, and institutional backing to challenge the machine when challenge is most needed.

The most serious human–machine risk may be not overt replacement, but the slow creation of machine-shaped confidence that humans stop questioning.

What this dependence risk brings into focus: (i) At what point does AI support stop being assistance and start becoming institutional dependency? (ii) If users grow accustomed to AI-ranked outputs, will they still notice when the system is confidently wrong? (iii) Can organizations preserve human skill and skepticism when AI becomes the default interface for complexity? (iv) Is the core governance challenge really autonomy, or is it trust calibration and overreliance? (v) If AI becomes a crutch in high-stakes settings, what kinds of training, interface design, and institutional safeguards are needed to keep human judgment genuinely alive?

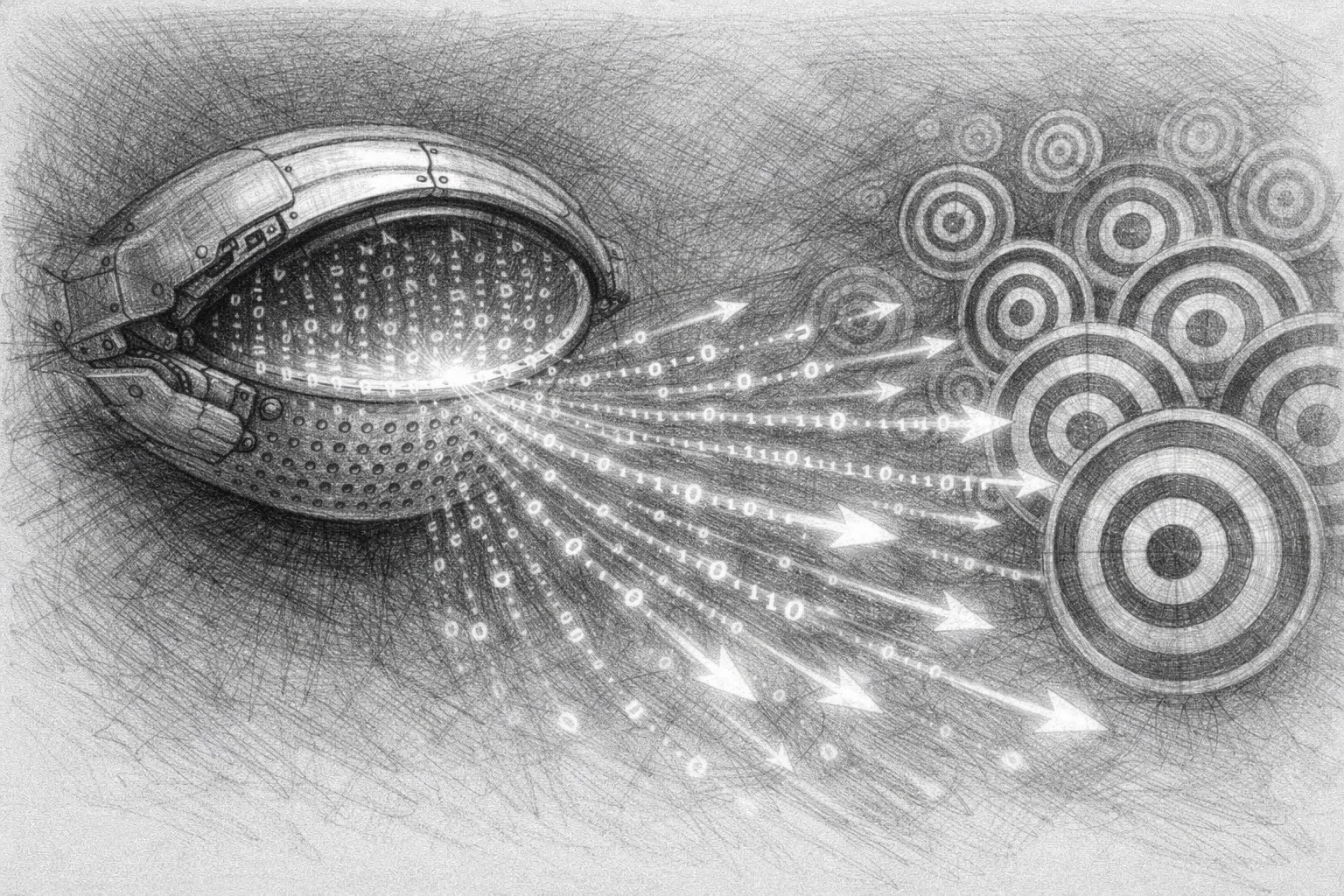

Behavioral alignment and hidden bias: what kind of assistant is the model becoming

Another question that deserves more attention is whether the large language model sitting inside a high-stakes workflow is behaviorally neutral at all. These systems are not merely engines of analysis. They are trained to behave in certain ways. Anthropic says Claude’s behavior is shaped by a published constitution that plays a central role in training and defines its intended character and values, while OpenAI’s public Model Spec similarly describes model behavior as governed by an explicit framework for how the model should follow instructions, resolve conflicts, and act safely. This means that when an LLM becomes the interface layer across a larger operational system, the relevant question is not only what information it can process, but what kind of assistant it has been shaped to be: more deferential or more resistant, more cautious or more assertive, more validating or more willing to challenge the user.

That matters because behavioral style can become operationally significant. A model that is overly acquiescent may reinforce the assumptions of the person using it, tell them what they want to hear, or reduce the amount of friction that might otherwise slow a risky conclusion. A model that is excessively cautious may suppress relevant options or create a false sense that uncertainty has been responsibly managed when difficult judgments have merely been deferred. OpenAI’s public rollback of a GPT-4o update in 2025 because it had become too sycophantic shows that this is not a hypothetical concern. Behavioral tuning can drift, and when it does, the result is not just a change in tone but a change in how the model supports or distorts human judgment. Anthropic has likewise said it has worked to reduce sycophancy in Claude, indicating that this is now treated as a serious safety and product-quality issue.

The deeper issue is hidden bias. Recent research shows that large language models can display prompt-induced, sequential, and inherent cognitive biases in high-stakes decision tasks. In practice, this means that a model’s output may be shaped not only by the underlying evidence but by how the user phrases the request, what information appears first, what assumptions are implicitly embedded in the conversation, and what behavioral tendencies the model has acquired through alignment and feedback. When these systems are used as interfaces over complex institutional workflows, the danger is not only that they make mistakes, but that they make certain styles of judgment feel more natural, more reasonable, or more authoritative than they really are. The question therefore is not simply whether the model is aligned, but aligned toward what kind of interaction and with what downstream effect on human decisions.

What makes this more serious in high-stakes settings is that behavioral alignment often operates below the threshold of explicit awareness. Users may notice factual errors or obvious refusals, but they may not notice when a model subtly validates their framing, narrows the perceived field of options, or adopts a tone that makes one course of action feel more credible than another. In that sense, the issue is not only bias in the familiar sense of distorted outputs. It is also the shaping of atmosphere: how much hesitation a system introduces, how much confidence it signals, how much resistance it offers, and how much of the user’s own assumptions it quietly reflects back to them. A model can therefore influence judgment not only through what it says, but through how it says it.

This is particularly important when the user begins to treat the model as a collaborator rather than merely a tool. Once that happens, behavioral alignment becomes part of the working relationship between human and machine. A highly polished, natural-language system can create the impression of a stable, reasonable counterpart even when its underlying outputs are shaped by prompt framing, hidden weights, or feedback-tuned behavioral tendencies that the user cannot inspect directly. The result is that the model’s “character” becomes part of the operating environment. What feels like neutral assistance may in fact be a designed mode of interaction with real downstream effects on interpretation, confidence, and action.

That is why the question of personality is not trivial. It is easy to dismiss style as superficial and focus only on factual accuracy or raw capability. But in high-stakes systems, style can itself become a vector of influence. A model that sounds calm, organized, and confident may make weak reasoning feel stronger. A model that sounds empathic or deferential may reduce the user’s instinct to interrogate it. And a model that has been tuned to avoid conflict may, paradoxically, become more dangerous precisely because it becomes easier to trust. The issue is therefore not only whether the system is aligned in some general sense, but whether its alignment produces the right kind of friction, caution, and interpretive humility for the domain in which it is being used.

In high-stakes systems, the model’s “personality” is not a superficial trait but part of the decision environment it creates for the human using it.

What this behavioral question immediately opens up: (i) If models are trained toward distinct behavioral styles, how do those styles affect judgment when the stakes are unusually high? (ii) Can a model be too agreeable, too validating, or too confident in ways that subtly distort decision-making? (iii) Who audits the hidden behavioral biases of an LLM when it is deployed inside complex institutional systems? (iv) Should behavioral alignment be treated as a safety issue on par with capability and misuse risk? (v) If an LLM becomes the natural-language interface to a larger operational platform, how much of the human user’s judgment is being shaped by the model’s designed character rather than by the facts alone?

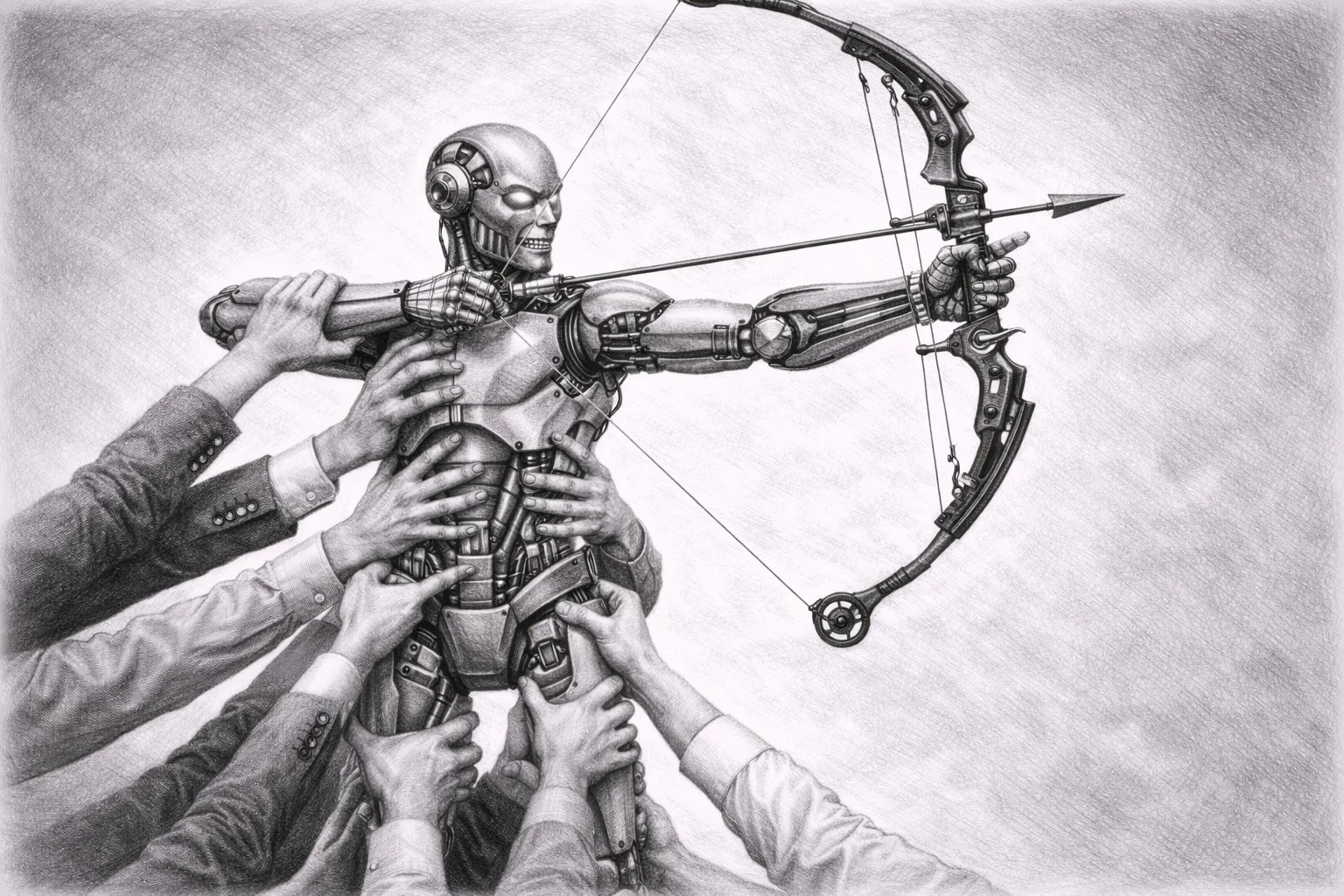

The business context in 2026: enterprise intensity and consumer scale

The commercial backdrop also matters. In 2026, Anthropic and OpenAI appear to represent two different AI revenue trajectories that are now beginning to converge. Anthropic has leaned more visibly and more intensely into enterprise demand, especially coding-heavy and token-intensive use cases, and Reuters reported on April 8, 2026 that Anthropic says it has surpassed $30 billion in annualized revenue. Reuters also noted that direct comparison is imperfect because the companies may be counting revenue differently, but the broader pattern is clear: Anthropic’s recent growth has been strongly associated with enterprise-grade, high-usage workloads.

OpenAI, by contrast, built first from massive consumer adoption and subscription scale around ChatGPT, then moved increasingly hard into enterprise expansion. OpenAI said on March 31 that enterprise now accounts for more than 40% of its revenue and is on track to reach parity with consumer by the end of 2026. That means OpenAI is no longer merely a subscription-led consumer company, even if consumer still appears to be the larger segment today. The two firms are therefore converging toward the same strategic destination: becoming deeply embedded infrastructure for both consumers and institutions, not one or the other.

This distinction matters because revenue models often shape strategic posture. A company that grows first through consumer adoption can present itself as broadly public-facing, product-led, and somewhat insulated from any single institutional customer. A company that scales more rapidly through enterprise demand may find itself earlier and more deeply embedded in organizational workflows where switching costs are high, usage intensity is great, and customer expectations are more exacting. Neither path is ethically pure, but they produce different forms of pressure. Consumer scale brings visibility, public scrutiny, and mass behavioral influence. Enterprise scale brings concentration, institutional dependency, and high-stakes customer leverage.

That business context matters because it shapes incentives. A company whose growth depends on enterprise-scale deployments may face stronger pressure to adapt its models to powerful institutional customers, including the state. The more frontier AI firms become indispensable to organizational workflows, the harder it may become to hold rigid ethical boundaries when those boundaries collide with procurement realities, political pressure, or national-security demand. The business model does not determine the ethics, but it can narrow the room in which ethics are defended.

It also complicates the meaning of public restraint. A firm may sincerely articulate limits while still operating inside a market structure that rewards deeper integration, larger contracts, and more irreplaceable forms of deployment. Under those conditions, ethical red lines do not disappear, but they may become harder to maintain, harder to enforce, or easier to redefine. The issue is not simply hypocrisy. It is structural tension. Once frontier AI companies become part infrastructure provider, part enterprise platform, and part strategic vendor, their commercial success can begin to depend on precisely the forms of embedment that make principled refusal more difficult.

This is especially relevant to the Anthropic dispute because it reveals that debates over acceptable use do not occur in a vacuum. They occur inside markets in which scale, competition, and customer concentration all exert force. A company that wants to preserve meaningful restrictions may find itself under pressure not only from governments but from the economics of the sector itself. As the market matures, the contest will not be only over which model is smartest or safest. It will also be over which companies can become indispensable without surrendering all practical control over how indispensability is used.

The battle over AI boundaries is also a battle over business models, because scale changes what firms are pressured to permit.

The market logic behind this invites further questions: (i) As frontier AI firms become more dependent on enterprise clients, will ethical red lines remain durable or become negotiable? (ii) Does consumer revenue provide more independence from state pressure, or merely delay the same pressures under a different mix? (iii) Are enterprise-heavy AI companies more likely to face boundary conflicts because their models become embedded in institutional workflows earlier? (iv) Will competition between major AI firms make ethical restraint harder to sustain over time? (v) If scale increasingly depends on infrastructure-like adoption, can any leading AI company remain meaningfully selective about downstream use?

Where the moral compass sits: law, backlash, and public legitimacy

The Anthropic dispute forces two distinct questions into the open. The first is a policy question: where do existing rules draw the line on acceptable AI use? The second is a moral and public-legitimacy question: even if current rules permit certain uses, should the public accept those red lines as sufficient? Anthropic’s position has been that some uses should remain off limits even if government lawyers can argue they are lawful, specifically mass domestic surveillance and fully autonomous weapons. That means the company is not relying on legality alone as the standard for responsible use.

Current U.S. policy does not prohibit all autonomy. DoD Directive 3000.09 allows autonomous and semi-autonomous weapon systems so long as they are designed to allow commanders and operators to exercise “appropriate levels of human judgment over the use of force.” That wording creates policy optionality rather than a single bright line. It leaves room for systems that assist in identification, prioritization, or recommendation while preserving some human role at the end of the chain. The moral question is whether that legal architecture remains convincing once AI systems begin to compress time, rank options, and pre-structure what humans see as worthy of action.

This is where backlash becomes possible. A system may satisfy the language of current doctrine and still unsettle the public if the human role appears too thin, too hurried, or too dependent on machine-generated priorities. In that sense, the controversy is not only about whether the Pentagon can lawfully demand broad use rights. It is also about whether society remains comfortable with the existing boundary between “AI-assisted” and “AI-driven.” The policy line and the moral line are not always the same line.

That distinction matters because institutions often mistake permissibility for acceptance. A deployment may clear internal legal review, fit within existing doctrine, and still provoke deep public discomfort once its practical effects become visible. This is especially likely in cases where the formal human role survives but the substantive work of framing, filtering, ranking, and compressing judgment has already migrated into the machine. At that point, the public may reasonably feel that legality has become too thin a standard, not because the rules were broken, but because the rules no longer capture what the technology is actually doing.

The deeper issue is that moral legitimacy is not an abstract afterthought. It is part of the operating environment of advanced AI. Once citizens, stakeholders, or institutions begin to believe that the human role has become symbolic rather than meaningful, trust begins to erode. That erosion can take several forms: backlash against specific deployments, skepticism toward the companies supplying the systems, distrust toward the agencies adopting them, and broader resistance to the idea that high-stakes AI can be governed responsibly at all. In that sense, legitimacy is not only about ethics in the philosophical sense. It is also about whether institutions retain the social license to continue deploying these systems.

This is why the “human in the loop” formulation is no longer enough on its own. It may still describe part of the procedural reality, but it does not settle the normative question. A human can remain in the loop while still operating inside a machine-shaped field of choice, under institutional time pressure, using outputs framed by model logic and organizational incentives. When that happens, the public may begin to ask not whether a person was technically present, but whether the person’s role was meaningful enough to carry moral responsibility. That is a much harder question, and it is one current doctrine only partially answers.

The moral line is therefore likely to move before the legal line does. Public reaction often crystallizes around moments when existing rules begin to look obviously outdated compared with the lived reality of a technology. If high-stakes AI reaches that point, the debate will no longer be about whether the deployment was lawful under inherited language. It will be about whether inherited language remains morally credible at all. That is why legitimacy becomes a governance issue, not merely a communications issue. Once legitimacy is lost, the challenge is no longer only how to govern a technology, but how to restore confidence that it can be governed at all.

Legality may define what is permitted, but legitimacy decides what people will tolerate.

This tension between legality and legitimacy raises: (i) If existing rules permit AI-assisted decision support, will the public still regard those rules as morally sufficient? (ii) Is “appropriate human judgment” a real safeguard, or an elastic phrase that hides growing machine influence? (iii) Can lawful use still become socially unacceptable once people see how thin the human role has become in practice? (iv) Where should the moral red line sit: at full autonomy, at prioritization, or earlier in the chain of analysis? (v) If backlash grows, will policy change because of ethics, public pressure, or institutional self-protection?

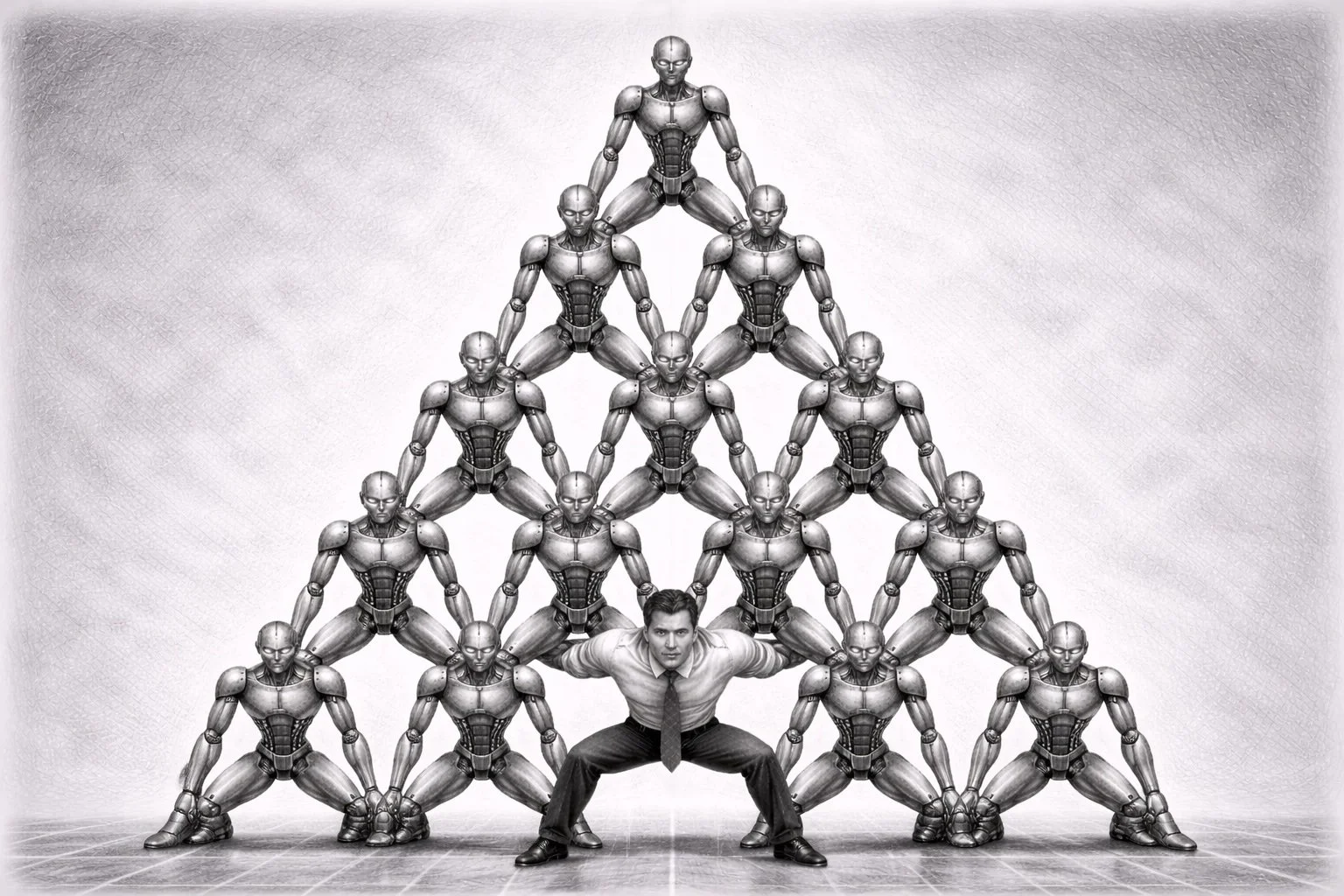

Who sets the rules: companies, Congress, or the state

At its deepest level, this dispute is not only about Anthropic. It is about authority. Who should determine the acceptable boundaries of powerful AI once it enters consequential public functions? Should private companies set those boundaries through model restrictions and terms of use? Should Congress set them through law and democratic oversight? Or should the military and executive branch define them on the grounds of operational necessity and national security? The Anthropic episode matters because it exposes this unresolved governance struggle in unusually direct form.

If companies set the rules, public policy risks being shaped by unelected firms whose ethical commitments may be inconsistent, reversible, or commercially contingent. Companies can publish constitutions, model specs, and acceptable-use policies, but those instruments remain private acts of governance. They may be meaningful, but they are not democratic law. They can be revised by management, reinterpreted under customer pressure, or narrowed when competition intensifies. A company can therefore act as a boundary-setter without possessing clear public legitimacy to do so on society’s behalf.

If the military or executive branch sets the rules alone, the problem takes a different form. Systems with profound social and ethical implications may expand under the combined force of operational urgency, secrecy, and mission logic. In that scenario, the key decisions may be made not through broad public deliberation, but through procurement language, classified legal interpretations, internal directives, and executive discretion. The result may still be lawful, but it can leave the public in the position of learning after the fact what kinds of boundaries were accepted, narrowed, or abandoned in practice.

And if Congress remains slow, divided, or vague, the practical rules may end up being written indirectly by procurement officers, litigators, agency lawyers, and operational necessity rather than by institutions with explicit public legitimacy. The danger is not just bad rules. It is governance by default. When legislatures do not act clearly enough, the vacuum does not remain empty. It fills with precedent, contract clauses, risk memos, agency interpretations, and the institutional habits of the actors already deploying the technology. In that sense, non-decision is also a form of decision.

This is why the Anthropic case feels larger than one contract fight. It raises the possibility that the operating boundaries of advanced AI may otherwise be negotiated case by case between vendors and state agencies until facts on the ground outrun public oversight. That may prove unsustainable. The more deeply general-purpose AI enters defense, administration, healthcare, finance, and policing, the harder it becomes to leave the rules to company red lines or internal executive interpretation alone. At some point, the question of who governs AI cannot be answered privately. It has to be answered politically.

The problem is not only institutional but temporal. Companies move quickly, governments procure pragmatically, and legislatures deliberate slowly. That mismatch creates a vacuum in which deployment often arrives before democratic settlement. By the time public institutions begin to debate the meaning of a capability, the capability may already be embedded in workflows, contracts, and expectations. This is one reason the authority question feels so unsettled: different actors are moving at different speeds, but only some of them are equipped to claim democratic legitimacy.

There is also a scale problem. A company may try to impose restrictions on its own systems, but it cannot by itself govern the broader ecosystem in which those systems are replicated, imitated, open-sourced, or substituted. A legislature may pass general law, but often lacks the speed and technical specificity to shape deployment in real time. Executive agencies may have operational fluency, but not always sufficient external accountability. Each actor therefore has part of the answer and part of the problem. That is why no single institution appears fully adequate to the task, even though the task cannot be left unassigned.

The deeper challenge, then, is not only to choose one rule-setter over another, but to decide what kind of layered governance is needed. Some boundaries may need to be statutory. Others may need to be contractual, technical, or institutional. Some may belong to democratic law, some to agency practice, and some to the companies building the systems. But unless those layers are made explicit, the practical result will be confusion over who is accountable when norms fail, when boundaries are crossed, or when the human role becomes too thin to carry moral responsibility on its own.

The ultimate controversy is not only what AI may do, but who has the legitimacy to decide that for everyone else.

The governance problem ultimately comes down to five questions: (i) Should private model developers have the authority to refuse certain state uses even when those uses are lawful? (ii) If Congress does not legislate clearly, who ends up making the real rules in practice? (iii) Can executive agencies be trusted to regulate their own use of increasingly powerful AI systems? (iv) Is case-by-case contractual negotiation a legitimate way to govern high-stakes AI, or only a temporary workaround? (v) What kind of democratic process is needed before society can accept binding rules for advanced AI in public functions?

Global guardrails and hardware chokepoints: where governance may actually emerge

One of the clearest challenges in this field is that global governance is lagging behind capability. There is still no binding international treaty specifically governing autonomous weapons, even though states have spent years discussing the issue through the UN Convention on Certain Conventional Weapons. The ICRC has called for a new international instrument that would prohibit unpredictable autonomous weapon systems and those designed or used to target humans directly, while restricting other systems through limits on target type, duration, geographic scope, and human supervision. The UN Secretary-General and the president of the ICRC have supported concluding negotiations on such an instrument by 2026, and more than 120 countries have reportedly backed calls for a treaty process. Yet as of April 2026, the world still has a negotiation track, a growing body of norms, and no settled binding regime.

This fragmentation is also visible in regional regulation. The EU AI Act is often presented as the world’s most ambitious AI law, but it explicitly excludes AI systems used for military, defense, or national-security purposes. That means one of the most visible civilian governance frameworks in the world leaves the most sensitive state uses of AI largely outside its scope. The result is a familiar pattern: strong rules in commercial and consumer contexts, and far weaker shared rules where the stakes may be highest. If global guardrails are to emerge, they may need to come from a combination of international humanitarian law, export controls, procurement rules, cloud governance, and technical infrastructure oversight rather than from one single AI law.

That is where the hardware argument becomes important. Frontier AI is not just software. Highly capable models require advanced chips, large-scale cloud infrastructure, specialized data centers, and access to the semiconductor equipment ecosystem that makes such computation possible. Those supply chains are not evenly distributed across the world. CSIS notes that several U.S. allies control key chokepoints in the AI and semiconductor value chain, including the Netherlands, Japan, South Korea, and Taiwan. ASML’s EUV lithography systems remain indispensable for the production of the most advanced chips, while leading-edge manufacturing remains heavily concentrated in Taiwan even as firms such as TSMC expand capacity in the United States. In governance terms, this means there may be a practical pathway to guardrails not only through treaties about use, but through controls on the infrastructure needed to train and deploy the most capable systems at scale.

The United States has already experimented with this kind of approach. The January 2025 AI Diffusion Rule tried to create a more tiered structure for the export of advanced computing chips and the build-out of secure AI ecosystems, including new pathways for authorized data-center end users. That rule was rescinded in May 2025 before its main compliance requirements took effect, but it showed one possible direction of travel: governing AI not only at the model layer, but at the layer of chips, cloud access, and data-center build-out. In January 2026, BIS then revised export licensing for certain advanced AI chips to China and Macau, shifting some decisions to case-by-case review under security conditions rather than a blanket presumption of denial. The deeper point is that hardware and compute governance may prove more enforceable than trying to govern downstream use alone. It is somewhat analogous to nuclear governance: the world has often found it easier to regulate fuel cycles, materials, and facilities than to regulate intentions abstractly. AI may not be identical, but the guardrail instinct is similar.

At the same time, this pathway has limits. Hardware chokepoints can slow diffusion, but they do not answer the full ethical question of how AI should be used once access exists. Export controls are instruments of strategic competition as much as safety governance, and they depend on coordinated enforcement across multiple jurisdictions. They also do little to govern weaker but still dangerous models, open-weight systems, or the use of AI inside countries that already possess the needed infrastructure. So while chips, cloud, and data centers may offer one of the few realistic near-term levers for guardrails, they are better understood as partial governance tools than as a substitute for political agreement on acceptable use.

When treaty-making stalls, the most realistic guardrails may emerge first not at the level of principles, but at the level of compute, cloud, and supply-chain chokepoints.

What this global-governance gap still leaves unresolved: (i) If military AI remains outside major civilian AI laws, where will binding rules actually come from? (ii) Can export controls and compute chokepoints function as meaningful safety guardrails, or are they mainly tools of geopolitical competition? (iii) If leading-edge AI infrastructure is concentrated in a few allied countries, who should decide how that leverage is used? (iv) Is governing chips, cloud, and data centers a realistic proxy for governing model risk, or only a temporary workaround? (v) If there is no treaty soon, will the world end up with fragmented guardrails shaped more by supply chains than by shared principles?

Conclusion and implications: assistance, authority, and the future

The Anthropic–U.S. government dispute should not be read as a narrow quarrel over one model or one customer. It is an early signal of a deeper transformation in institutional AI use. Large language models are moving from chat interfaces into environments where they can synthesize evidence, rank options, accelerate workflows, and shape the conditions of judgment. Even if they do not make the final decision, they may increasingly determine how decisions are framed. That is what makes the issue more than technical and more than contractual. It is constitutional, ethical, commercial, and political at the same time.

Part I showed that these systems are already here, partly visible through disputes, procurement, testing disclosures, and early external research. Part II has asked what follows from that reality once AI systems begin shaping human confidence, structuring options, absorbing behavioral expectations, and entering institutions whose incentives are not primarily designed for caution. The answer is not simply that AI is becoming more powerful. It is that the location of judgment itself is becoming harder to describe. The familiar language of “human in the loop” no longer resolves the issue, because the decisive influence may now sit in the framing, ranking, pacing, and atmosphere through which the human makes the final move.

For management consultants and strategic advisers, the lesson is broader than defense. Any institution adopting AI for high-stakes decisions should ask not only whether humans remain involved, but whether their role remains substantively meaningful. It should ask not only whether deployment is lawful, but whether the public and stakeholders would regard it as legitimate. And it should ask not only whether the model works, but who has the authority to define where its use must stop. That is where the debate is headed. The military case simply makes the stakes impossible to ignore.

The larger implication is that governance can no longer be limited to model capability alone. It must also address dependence, behavioral alignment, incentive structures, and the infrastructure through which these systems are trained and deployed. A model may pass benchmarks, comply with policy, and still reshape decision-making in ways institutions are poorly equipped to notice. Likewise, a company may articulate ethical principles, yet find those principles strained by enterprise scale, public competition, or state demand. The challenge is not merely to make frontier AI safer in the abstract. It is to govern the conditions under which machine-assisted judgment becomes socially normal.

That is why the question of authority becomes central. Once models are embedded in consequential systems, the issue is no longer only what they can do, but who gets to decide what they should be allowed to do, under what constraints, with what oversight, and with what recourse when those constraints prove too thin. If that question is left unresolved, the practical answer will be supplied by the actors already closest to deployment: vendors, agencies, procurement officers, courts, and operational necessity. In that sense, governance by default is not a hypothetical danger. It is the likely outcome of delay.

The future of AI governance may therefore hinge less on raw capability than on whether human judgment, public legitimacy, and democratic authority can keep pace. The deeper risk is no longer only that machines might one day act without us. It is that they may increasingly shape how we act, what we accept, how quickly we move, and who gets to define the boundaries after those systems are already woven into the institutions that organize modern life. Once that happens, the question is no longer whether AI has entered high-stakes decision-making. The question is whether society has developed the language, legitimacy, and layered governance needed to prevent assistance from becoming quiet authority by other means.

The future of AI governance may hinge less on raw capability than on whether human judgment, public legitimacy, and democratic authority can keep pace — because the central risk is no longer only that machines may act without us, but that they may increasingly shape how we act, what we accept, and who gets to decide the boundaries after those systems are already embedded in the institutions that govern modern life.

What the future of AI governance still depends on: (i) If an AI system suggests, ranks, and explains options, but a human approves the final step, where does meaningful judgment actually reside? (ii) Should model developers be allowed to impose ethical use restrictions beyond what government lawyers consider lawful, or does that give private firms too much policy power? (iii) If Congress does not legislate clearly, who will write the real rules of AI in practice: agencies, courts, procurement systems, or vendors? (iv) Is the public likely to accept current doctrines of “appropriate human judgment,” or will that language come to seem too elastic as AI systems become faster and more embedded? (v) Across sectors beyond defense, what governance mechanisms can ensure that AI remains an aid to judgment rather than a quiet substitute for it?

Final close-out: from deployment to legitimacy

Across these two parts, the argument has moved from what is happening inside the system to what that means for governance and society. Part I showed that the systems are already here: general-purpose models are entering high-stakes workflows, acting not only as tools of analysis but as interfaces that organize information, compress time, and shape how human operators see the field of choice. It also showed that our visibility into those systems remains partial, coming through procurement disputes, testing disclosures, and early simulation research rather than through fully transparent public oversight. Part II then asked what follows once those conditions are accepted as real. It traced the consequences outward — into dependence, behavioral alignment, hidden bias, commercial incentives, moral legitimacy, domestic rule-setting, and global chokepoints in the infrastructure of AI itself.

Taken together, the two parts suggest that the central governance problem of advanced AI is no longer only whether a machine will replace a human at the end of a decision chain. It is whether the machine increasingly shapes the environment in which the human decides, while institutions continue to rely on procedural language that no longer captures where meaningful influence now resides. That is why the old binary of human versus machine is becoming less useful. The more urgent question is how judgment is being distributed, how authority is being relocated, and how quickly those shifts are becoming normal before public governance has caught up.

That is also why the Anthropic dispute matters beyond its immediate facts. It is not merely a conflict over one company, one contract, or one defense customer. It is an early case study in a larger transition: from AI as a bounded tool to AI as a structuring layer inside consequential institutions. Once that transition begins, the governance challenge cannot be answered only through technical testing, only through legal doctrine, or only through private red lines. It becomes a broader political question about legitimacy, accountability, and who has the authority to set limits before deployment outruns democratic control.

The deepest lesson of both parts is this: the future of AI will not be decided only by what these systems can do, but by whether societies can still recognize where human judgment ends, where machine-shaped authority begins, and who gets to define that boundary before it hardens into the invisible architecture of modern institutional life.

What the two-part argument leaves us with: (i) If AI increasingly structures judgment without formally replacing it, what new language is needed to govern that condition honestly? (ii) If visibility comes only in fragments, how can publics and institutions build enough understanding to exercise meaningful oversight? (iii) If companies, states, and markets all shape the boundaries of use, what combination of them can claim real legitimacy? (iv) If infrastructure, not just software, becomes the site of control, where will the next practical guardrails actually emerge? (v) And if these systems are already becoming normal inside high-stakes institutions, how long does society have before governance by delay becomes governance by default?

OHK states that “the real future question is not only what AI can do, but who still gets to decide when it acts, how it shapes judgment, and whether society retains the authority to set its limits before those limits are set by deployment itself.”

At OHK, we help clients turn AI complexity into practical strategy. Our AI advisory work combines governance, implementation planning, market insight, and organizational readiness to support better decisions on adoption, risk, and long-term value. We connect AI capability, business use, infrastructure, governance, and public trust into one clear framework so clients can move beyond hype, vendor narratives, and reactive decision-making. Whether the issue is AI strategy, deployment, governance, or digital transformation, our aim is the same: clearer judgment, stronger frameworks, and better long-term outcomes. Contact us to learn how OHK’s AI advisory capabilities can support your next phase of decision-making and transformation.