Building the Institutional Architecture of Partnership in Utilities

Part 2 — From Framework to Practice: How to Build a Future-Ready PRM Operating Model

This illustration captures the central argument of Part II: utilities do not build Partner Relationship Management through software alone, but through the deliberate design of an operating model. The paired figures working through diagrams, checklists, and systems suggest that partnership must be structured through discovery, segmentation, governance, performance visibility, and implementation rather than left to informal coordination. The surrounding infrastructure, data, and energy landscape reinforces the idea that utilities now operate within a dense institutional ecosystem that must be understood, organized, and operationalized. In that sense, the image reflects Part II’s core message that PRM becomes real only when relationship architecture is translated into disciplined practice.

Intro to the “Building the Institutional Architecture of Partnership” Trilogy: Utilities are entering a period in which relationships can no longer be managed as a peripheral function. In increasingly complex operating environments, success depends not only on assets, operations, and regulation, but on the institution’s ability to manage a wider ecosystem of regulators, government entities, contractors, technology providers, and community actors through a clear and disciplined model. This trilogy argues that Partner Relationship Management, or PRM, is becoming a core institutional capability for utilities. It is therefore about much more than communications or software. It is about how a utility structures the relationship layer around the ecosystem that shapes delivery, resilience, trust, innovation, and long-term value.

The trilogy follows a deliberate progression from diagnosis to design to digital and operational enablement. Part I explained why this matters by showing that utilities now operate in dense stakeholder and partner ecosystems and that informal relationship handling is no longer enough. Part II addresses how PRM should be designed and operationalized as an operating model built around mapping, segmentation, governance, journeys, measurement, and implementation. Part III examines how PRM should be enabled through the enterprise stack, including ERP, CRM, SRM, integration, and digital sovereignty.

Reading Time: 45 min.

All illustrations are copyrighted and may not be used, reproduced, or distributed without prior written permission.

Summary: Part II examines how utilities can move from recognizing the strategic importance of Partner Relationship Management to actually building it as an operating model. It argues that PRM does not become real through aspiration, software, or isolated process fixes, but through a deliberate sequence of ecosystem discovery, operating-model design, governance, performance architecture, technology enablement, and institutional adoption. The article shows that utilities must first understand their relationship environment, then classify and govern it, and only afterward digitize and scale it. The overall arc is: the implementation trap → ecosystem discovery → operating-model design → governance → performance visibility → technology in the right place → operationalization and knowledge transfer → a maturity roadmap → PRM as a built capability rather than a concept.

The Implementation Trap: Why Institutions Fail When They Jump Too Fast

Tokyo Gas i NET, Tokyo, Japan: Tokyo Gas i NET is a strong example because it did not jump directly from operational pressure to full-scale deployment. The company first identified fragmented systems, inefficient maintenance practices, and slow response handling across the Tokyo Gas Group, then ran a proof of concept with junior engineers before building a full-scale service infrastructure. It also kept as much of the deployment in-house as possible in order to build long-term internal capability. That sequencing is exactly the point of this section: institutions do better when they validate the model and learn from a contained implementation before scaling technology across the enterprise.

Most institutions do not fail at partner relationship management because they lack intent. They fail because they compress the journey. They see that the external environment has become more complex. They recognize that relationships are now more strategically significant than before. They know coordination could be stronger, visibility could be better, and institutional consistency could improve. But instead of translating that recognition into a design sequence, they rush toward visible solutions. They ask for a platform, a dashboard, a workflow engine, a portal, a reporting layer, or a new set of engagement procedures. In doing so, they skip the most important question of all: what operating model is this technology or process actually meant to support? That is the implementation trap.

A future-ready PRM model is not built by starting with software and hoping governance will emerge around it. Nor is it built by drafting a high-level framework that never reaches the point of operational reality. It must be built in sequence. It begins with ecosystem discovery. It moves into operating-model design. It establishes governance and performance discipline. Only then does it translate into enabling systems, institutional routines, and day-to-day practice. This ordering matters because PRM is not a decorative overlay on top of existing operations. It is a way of structuring how relationships are identified, classified, governed, measured, and improved. If the institution does not define that logic first, any digital or procedural intervention will rest on ambiguity. It may still generate activity, but it will not generate coherence.

There is a common institutional temptation here. Visible tools create the appearance of progress. A software platform gives transformation a name, a budget line, a timeline, and a dashboard. A consulting framework gives it presentation value. A new process map gives it formal language. But none of these guarantee that the organization has answered the core design questions. If the relationship model remains unclear, then the intervention may simply formalize confusion rather than resolve it. That is why the first implementation discipline is restraint. Before the institution automates, it must understand. Before it standardizes, it must classify. Before it measures, it must define what success actually means. Before it assigns workflow, it must assign ownership. And before it speaks about integration, it must decide what kind of capability it is really trying to build.

The institutions that get this wrong are not usually careless. They are usually impatient. They are trying to solve a real problem quickly. But relationship complexity cannot be solved well by leaping over design. It must be worked through in a sequence that turns insight into architecture, and architecture into practice. That is where implementation really begins.

Institutions do not fail because they move too slowly, but because they move to solutions before they have designed the system those solutions must serve.

OHK has observed that many institutions do not struggle at the implementation stage because they lack commitment, but because they move too quickly from ambition to visible solutions without first establishing the model those solutions are meant to support. The warning signs usually appear early: (i) leadership asks for platforms, dashboards, or workflows before agreeing on the basic logic of the relationship environment, (ii) teams speak confidently about transformation while key terms such as ownership, escalation, and value remain undefined, (iii) process discussions advance faster than strategic clarity, (iv) software becomes a proxy for progress even when governance is still vague, and (v) institutions assume that formal activity is evidence of operating discipline. These patterns can create momentum on paper, but over time they expose a deeper weakness: the institution has tried to accelerate implementation without first designing what it is actually trying to implement. That is where execution begins to outrun architecture, and where many PRM efforts lose coherence before they have truly begun.

Start with Ecosystem Discovery: Visibility Must Come Before Design and Control

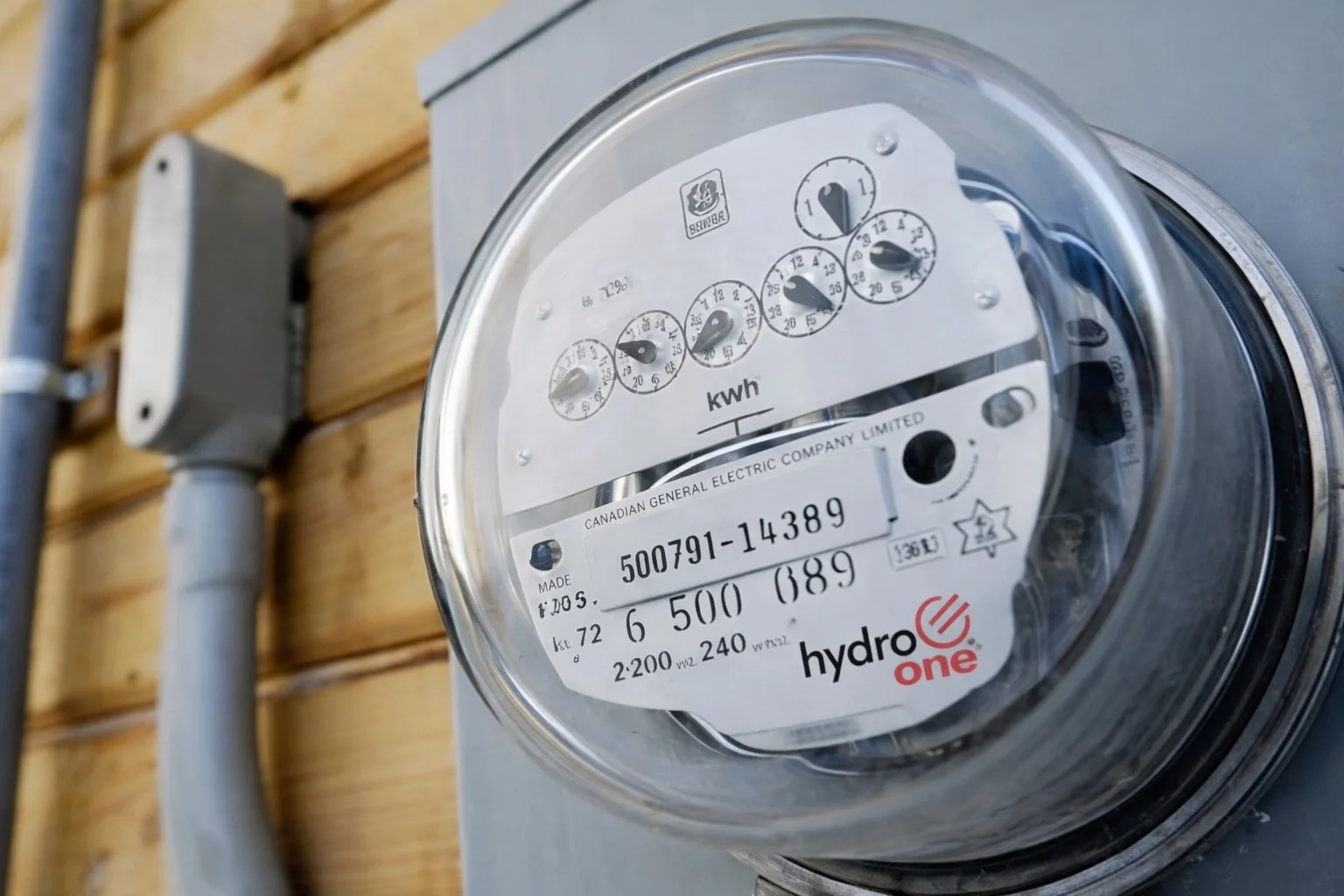

Hydro One, Ontario, Canada: In its 2024 sustainability reporting, Hydro One explains that it used input from stakeholders, Indigenous communities, partners, and businesses to identify the issues most important to the organization, including the energy transition. Its sustainability materials also include tables showing how it engaged and what topics were discussed with different stakeholder groups. This is important because it shows an institution turning broad external complexity into a structured view of concerns, priorities, and relationship patterns before moving further into strategy and operating decisions.

Before an institution can manage relationships well, it must understand the shape of its relationship environment. This sounds obvious, but in practice many organizations do not possess a truly useful view of their ecosystem. They may know many of the actors individually, but not the structure of the ecosystem as a whole. Relationship intelligence is scattered across departments. Categories are inconsistent. Some actors are heavily visible in one business unit and nearly invisible in another. Internal teams often hold very different assumptions about who counts as a partner, what outcomes matter, where friction sits, and which relationships are strategically critical. A serious PRM effort therefore begins with ecosystem discovery.

This is not a clerical exercise. It is the first act of institutional diagnosis. It asks the organization to identify all relevant internal and external actors, and then to analyze them in ways that are useful for management rather than merely descriptive. Mapping alone is not enough. The institution must understand patterns, significance, interdependence, and current failure points. That process typically includes stakeholder and partner mapping, multidimensional categorization, influence and interest analysis, interviews, workshops, maturity assessment, and gap identification. But what matters is not the checklist itself. What matters is that discovery transforms partial knowledge into an institutional view.

At this stage, the key questions are diagnostic:

Which relationships are strategic and which are routine?

Which are under-governed?

Which are over-serviced relative to value?

Which are highly person-dependent?

Which involve repeated friction across touchpoints?

Where are internal teams duplicating effort?

Which relationships already work well and can serve as reference models?

These questions shift the organization from description to insight. They reveal not just who is present in the ecosystem, but how the current model is performing and where it is failing. This stage is especially important because institutions often believe they know their ecosystem better than they actually do. Familiarity can hide structural blindness. A team may know its own relationships very well, while having little visibility into how other parts of the institution are engaging the same actors. Leadership may assume alignment because relationships appear stable, even when that stability is held together by informal workarounds and personal relationships rather than by formal design. Ecosystem discovery breaks that illusion.
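Two of the diagnostic questions above, duplicated effort and person-dependence, can be illustrated with a small sketch. It assumes the institution can assemble a simple log of which department engages which external actor through which named contact. The record layout, the sample names, and the `discovery_flags` function are hypothetical and exist only for illustration; real discovery would draw on interviews and workshops as well as data.

```python
from collections import defaultdict

# Hypothetical contact records gathered during discovery:
# (department, external actor, named internal contact).
contact_log = [
    ("Procurement", "Grid Contractor A", "Sam"),
    ("Operations", "Grid Contractor A", "Sam"),
    ("Public Affairs", "Municipal Council", "Lee"),
    ("Operations", "Municipal Council", "Ana"),
    ("Regulatory", "Energy Regulator", "Kim"),
]

def discovery_flags(log):
    """Return, per actor, the engaging departments and two simple diagnostic flags."""
    departments = defaultdict(set)
    contacts = defaultdict(set)
    for dept, actor, person in log:
        departments[actor].add(dept)
        contacts[actor].add(person)
    report = {}
    for actor in departments:
        report[actor] = {
            "departments": sorted(departments[actor]),
            # More than one department engaging the same actor: possible duplicated effort.
            "multi_department": len(departments[actor]) > 1,
            # Only one named contact holds the relationship: possible person-dependence.
            "person_dependent": len(contacts[actor]) == 1,
        }
    return report

report = discovery_flags(contact_log)
for actor, flags in report.items():
    print(actor, flags)
```

The flags are prompts for a conversation rather than findings. An actor marked as multi-department may be well coordinated, and a single contact may be entirely appropriate for a routine relationship.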

It also helps expose something fundamental: not every relationship that appears important is strategically important in the same way. Some relationships matter because of statutory authority. Some because of delivery dependence. Some because of innovation potential. Some because of political sensitivity. Some because they shape legitimacy, trust, or reputational exposure. Some because they sit at the center of multiple workflows or risks. Unless those distinctions are surfaced early, the institution will later design a model that is too generic to be useful. This is why visibility must come first. Institutions cannot govern what they have not clearly classified. They cannot assign ownership to what they have not yet understood. They cannot build journeys for relationships that still exist only as scattered contacts. And they cannot build performance architecture for a relationship environment they have not yet made legible to themselves.

In that sense, discovery is not pre-work. It is the first layer of the operating model itself.

You cannot govern an ecosystem you have not first made visible to yourself.

OHK has observed that institutions often believe they understand their relationship environment long before they have made it visible in a form that can actually support management. In many cases, familiarity is mistaken for clarity. The warning signs are usually telling: (i) different departments hold incompatible views of who the important external actors really are, (ii) relationship data is dispersed across teams with no shared institutional picture, (iii) categories are descriptive rather than analytically useful, (iv) actors who are central to one function remain nearly invisible to another, and (v) leadership assumes the ecosystem is understood because key names are known, even though patterns of dependence, friction, and overlap remain unmapped. These weaknesses are rarely dramatic at first. But over time, they reveal that the institution is trying to design from partial knowledge. That is why visibility must come before design: without a shared view of the ecosystem, the model that follows will almost always be too generic, too uneven, or too blind to the real sources of complexity.

Design the Operating Model: Segmentation, Journeys, and Roles Must Work Together

Ausgrid, Sydney, Australia: Ausgrid shows how engagement can be translated into a more deliberate operating logic rather than remaining a consultation exercise. In its FY24 Sustainability Report, Ausgrid states that it had completed more than two years of extensive engagement on what customers and stakeholders value and what their future priorities are, and that this process informs updates to its annual budget, business plan, and associated operational strategies. That matters because it shows the institution using external insight to shape the design of how it plans and operates, not simply how it communicates. In other words, engagement is feeding the model itself.

Once the ecosystem is visible, the institution can move into the heart of the work: operating-model design. This is where PRM becomes concrete. It is where the institution decides how relationships should work, what differentiates one category from another, how interactions should evolve over time, and how internal accountability should be structured. Without this stage, PRM remains a concept. With it, PRM becomes a model that can actually be run. The first design decision is segmentation.

Not all partners should be managed in the same way, because not all relationships create the same type of value or risk. A future-ready PRM model defines meaningful categories and often tiers within them. Those categories may reflect statutory position, strategic criticality, service dependence, regulatory relevance, innovation potential, public interface, delivery importance, or reputational sensitivity. Some institutions will need only a few clear segments. Others will require a more layered framework. What matters is not complexity for its own sake, but meaningful differentiation. Segmentation matters because it determines almost everything downstream. Once the institution knows what type of relationship it is managing, it can decide what level of governance is appropriate, what service expectations should apply, how frequently engagement should occur, what form of escalation is needed, what data should be captured, and which performance metrics are relevant.

The second design decision is tiering. Even within the same category, not every relationship has equal weight. Two suppliers may both fall within a strategic segment, but one may be far more critical to service continuity than the other. Two public-sector relationships may both matter, but one may require regular executive attention while the other remains primarily operational. Tiering introduces disciplined difference within categories. It helps the institution avoid both over-management and under-management.

The third design decision is lifecycle definition. Relationships are dynamic. They are identified, assessed, onboarded, activated, governed, reviewed, improved, and sometimes exited. A robust PRM model defines these lifecycle stages intentionally and clarifies the decisions, handoffs, service expectations, and accountability points within each one. This is more than workflow design. It is a way of reducing friction and increasing institutional consistency. When lifecycle stages are clear, partners experience less confusion, teams know what to do at each step, and escalation becomes less improvised.
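One way to make lifecycle stages explicit is to write down which handoffs are permitted. The sketch below takes the stage names from the paragraph above; the specific transitions in `ALLOWED` are an assumption made for illustration, and each institution would define its own.

```python
from enum import Enum

class Stage(Enum):
    IDENTIFIED = "identified"
    ASSESSED = "assessed"
    ONBOARDED = "onboarded"
    ACTIVATED = "activated"
    GOVERNED = "governed"
    REVIEWED = "reviewed"
    IMPROVED = "improved"
    EXITED = "exited"

# Assumed transition rules: a relationship moves forward one stage at a time,
# cycles between governed, reviewed, and improved, and may be exited at any point.
ALLOWED = {
    Stage.IDENTIFIED: {Stage.ASSESSED, Stage.EXITED},
    Stage.ASSESSED: {Stage.ONBOARDED, Stage.EXITED},
    Stage.ONBOARDED: {Stage.ACTIVATED, Stage.EXITED},
    Stage.ACTIVATED: {Stage.GOVERNED, Stage.EXITED},
    Stage.GOVERNED: {Stage.REVIEWED, Stage.EXITED},
    Stage.REVIEWED: {Stage.IMPROVED, Stage.GOVERNED, Stage.EXITED},
    Stage.IMPROVED: {Stage.GOVERNED, Stage.EXITED},
    Stage.EXITED: set(),
}

def advance(current, target):
    """Return the target stage if the handoff is defined; otherwise raise ValueError."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not a defined handoff")
    return target

stage = advance(Stage.IDENTIFIED, Stage.ASSESSED)
print(stage.value)
```

Writing the transitions down forces the decisions the paragraph describes: an attempt to activate a relationship that was never assessed fails visibly instead of being improvised.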

The fourth design decision is touchpoint architecture. Poorly designed touchpoints are among the least visible weaknesses in institutions. Partners often encounter different teams, systems, messages, and expectations depending on where they enter the organization. A mature PRM model designs touchpoints consciously. It clarifies who engages when, through which channel, with what purpose, and according to what cadence. It asks not only how many interactions occur, but how coherent those interactions feel to the external actor and how well they align internally.

The fifth design decision is role clarity. Without explicit ownership, PRM quickly becomes everyone’s responsibility and therefore no one’s discipline. The operating model must define who owns strategic relationships, who coordinates operational ones, who has escalation authority, who monitors performance, who manages compliance, and how cross-functional collaboration will occur. In complex institutions, this often means distinguishing between relationship owner, process owner, governance owner, and executive sponsor. These roles should not blur into one another. They should complement one another.

The sixth design decision is engagement logic. This is where the institution translates category and lifecycle into behavior. What kind of engagement is appropriate for each relationship type? Which relationships require regular reviews? Which require structured communications? Which need formal consultation channels? Which require issue-tracking and escalation? Which can remain relatively lightweight? These decisions determine whether PRM becomes a useful operating logic or an overbuilt administrative burden.
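Engagement logic can be expressed as a lookup from segment and tier to an engagement profile. The following is a minimal sketch: the segment names, tier numbers, cadences, and the `EngagementProfile` fields are all hypothetical placeholders, not a recommended configuration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EngagementProfile:
    review_cadence: str        # how often the relationship is formally reviewed
    escalation_contact: str    # first point of escalation
    formal_consultation: bool  # whether a structured consultation channel applies

# Hypothetical mapping from (segment, tier) to an engagement profile.
PROFILES = {
    ("regulator", 1): EngagementProfile("monthly", "executive sponsor", True),
    ("strategic_supplier", 1): EngagementProfile("quarterly", "governance forum", False),
    ("strategic_supplier", 2): EngagementProfile("semiannual", "relationship owner", False),
}

# Relationships without a specific entry stay lightweight by default.
DEFAULT_PROFILE = EngagementProfile("annual", "relationship owner", False)

def engagement_for(segment, tier):
    """Look up the engagement profile for a relationship, falling back to the default."""
    return PROFILES.get((segment, tier), DEFAULT_PROFILE)

print(engagement_for("strategic_supplier", 2))
print(engagement_for("community_group", 3))
```

The explicit default is the design choice worth noting. It keeps the model from becoming an overbuilt administrative burden, because heavier engagement has to be justified by an entry in the table.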

This entire stage is the point at which PRM stops being a broad aspiration and becomes a design discipline. The organization is no longer just saying that relationships matter. It is deciding how they should be classified, governed, and experienced over time. That is the difference between recognizing complexity and building a model capable of managing it.

PRM becomes real only when segmentation, journeys, and roles stop existing separately and begin to work as one operating model.

OHK has observed that many organizations produce relationship frameworks that appear sophisticated on paper but break down in practice because the elements of the operating model were never designed to work together. The early signs are often easy to miss: (i) segmentation exists, but it does not meaningfully shape governance or service expectations, (ii) lifecycle stages are named without clarifying decisions, handoffs, or accountability, (iii) journeys are mapped without addressing who owns the touchpoints within them, (iv) role titles are assigned without distinguishing between relationship ownership, governance ownership, and executive oversight, and (v) the model describes how relationships look conceptually but not how they should work operationally. These gaps can remain hidden while the framework is still new. But over time, they expose a deeper weakness: the institution has built separate design components without turning them into a coherent operating model. That is where PRM begins to look complete in presentation, but incomplete in execution.

Governance Gives PRM Credibility: Without Decision Rights, Models Remain Aspirational

Ausgrid Customer and Stakeholder Consultative Committees, Sydney, Australia: Ausgrid’s Customer and Stakeholder Consultative Committees offer a useful governance example because they formalize where stakeholder input sits and what it is expected to influence. Their mandate is to help Ausgrid better understand stakeholder needs and views and to consider those perspectives in its decisions. They also serve as the main body for customer and external stakeholder input on corporate strategy, regulatory proposals, policies, service plans, and service delivery. This is valuable because it shows that engagement becomes more credible when it is anchored in a defined forum, with a stated purpose, scope, and role in decision-making.

If segmentation defines difference, governance defines order. This is the stage at which many otherwise promising PRM efforts weaken. Institutions create categories, draft journeys, and design touchpoints, but leave the governance layer vague. They define what should happen, but not who has authority. They assign activities, but not accountability. They recommend dashboards, but not review routines. The result is a model that looks coherent on paper but fails in practice because no one is clearly responsible for maintaining, enforcing, or adapting it.

A strong PRM model therefore needs explicit governance. That means clear decision rights. Who approves exceptions? Who owns strategic relationship categories? Who can escalate a conflict? Who decides when a relationship changes tier? Who is accountable for cross-functional coordination? Who can resolve disputes between units that are approaching the same actor differently? These are not secondary details. They are what make the model governable.

Governance also requires escalation pathways. When relationships become strained, when obligations are not being met, when risk rises, or when multiple internal priorities collide, the institution cannot rely on informal access alone. It needs defined escalation routes that are proportionate, visible, and understood. Escalation should not be a sign that the model has failed. It should be part of the model’s design.

The same is true for conflict resolution. Relationships create value, but they also create disagreement. Different units may want different outcomes from the same partner. External actors may resist demands, challenge expectations, or escalate issues of their own. Without a governance structure for resolving these tensions, relationship management becomes personality-driven and reactive. A mature PRM model gives conflict somewhere to go.

Governance also has a risk dimension. Certain relationships create operational risk, reputational risk, compliance risk, continuity risk, or political exposure. A strong PRM model identifies these risks explicitly and defines where they sit. Which risks are owned by the relationship owner? Which must be escalated to a governance forum? Which belong in compliance review? Which require executive sponsorship? Unless risk is embedded in the design, governance remains too abstract to be useful.
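The questions above amount to a routing rule: given a risk type and its severity, where does the risk sit? The sketch below uses the risk types and destinations named in this section. The severity scale and the specific routing choices are assumptions for illustration; an institution's own governance design would set them.

```python
RISK_TYPES = {"operational", "reputational", "compliance", "continuity", "political"}
SEVERITIES = {"low", "medium", "high"}

def route_risk(risk_type, severity):
    """Return the assumed owner of a relationship risk under illustrative rules."""
    if risk_type not in RISK_TYPES:
        raise ValueError(f"unknown risk type: {risk_type}")
    if severity not in SEVERITIES:
        raise ValueError(f"unknown severity: {severity}")
    # Assumption: compliance risks always go to compliance review, whatever the severity.
    if risk_type == "compliance":
        return "compliance review"
    # Assumption: high reputational or political exposure needs executive sponsorship.
    if severity == "high" and risk_type in {"reputational", "political"}:
        return "executive sponsor"
    # Assumption: other medium or high risks are escalated to a governance forum.
    if severity in {"medium", "high"}:
        return "governance forum"
    # Everything else stays with the relationship owner.
    return "relationship owner"

print(route_risk("continuity", "medium"))
print(route_risk("political", "high"))
```

The value of writing the rule down is that escalation stops depending on informal access: anyone can check where a given risk belongs before a situation becomes strained.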

Then there is compliance. In many institutions, relationship management fails because it is treated as soft, while compliance is treated as hard. But in reality, the two must work together. Certain relationship types require policy discipline, documentation, approvals, auditability, and review. A future-ready PRM model does not separate governance from compliance as though one were relational and the other procedural. It recognizes that sustainable relationship management requires both.

Cross-functional ownership is equally important. In complex institutions, no single function “owns” the external environment in total. Legal, operations, transformation, procurement, public affairs, executive leadership, and service functions may all play roles. Governance therefore has to do two things at once: it must create enough central structure to ensure consistency, while preserving enough distributed ownership to remain workable in practice.

Good governance protects the institution from two opposite dangers at once. On one side is chaos, where every relationship evolves differently and inconsistently. On the other side is rigidity, where excessive centralization slows action and discourages initiative. Effective PRM governance strikes a balance. It standardizes where consistency matters and allows flexibility where context requires it. This is why governance is not a bureaucratic add-on. It is what gives PRM credibility. It signals that relationship management is not a soft function at the edge of the institution, but a managed capability with rules, escalation discipline, and institutional consequence.

UK Power Networks, London, United Kingdom: UK Power Networks is a strong example of making relationship performance visible because it does not treat engagement as an invisible background activity. In its 2023/24 Annual Review, the company says it will report annually on the outcomes of its stakeholder engagement programme through an Ongoing Engagement Report, and it also links stakeholder-informed activity to visible outcomes such as action plans to reduce supply-chain emissions and the use of tools like the Local Net Zero Hub by local authorities. This is useful because it shows that engagement is not only happening; it is being connected to outcomes, reviewed publicly, and made available as part of a continuing management and reporting discipline.

Without decision rights, escalation paths, and accountability, relationship management remains aspiration rather than discipline.

OHK has observed that relationship models often lose force not because they are poorly intentioned, but because governance remains too vague to carry real institutional authority. The warning signs tend to surface in familiar ways: (i) roles are described, but decision rights remain unclear, (ii) escalation exists informally but not through agreed pathways, (iii) conflicts are managed case by case rather than through a repeatable structure, (iv) compliance and risk are treated as adjacent concerns rather than embedded features of the relationship model, and (v) cross-functional collaboration depends on goodwill more than on defined accountability. These weaknesses may still allow the institution to function for a time, especially when relationships are stable. But under pressure, they reveal that the model lacks governance credibility. At that point, PRM begins to look like guidance rather than discipline. That is why strong governance is not administrative excess. It is what turns relationship management into a capability the institution can actually enforce, sustain, and trust.

Make Performance Visible: Relationships Improve Only When Performance Can Be Seen

United Utilities, Warrington, England: United Utilities is a useful example because it makes stakeholder input visible in relation to performance commitments, not just engagement activity. In its 2024 Annual Report, the company says that customer and stakeholder engagement directly informed the development of its business plan, including ambition and performance commitments on water supply, customer experience, affordability, biodiversity, and carbon/net zero. It also describes structured engagement formats, independent challenge through YourVoice, and board-level confidence that the plan reflected stakeholder priorities. This makes United Utilities a strong fit because it shows that relationship management becomes meaningful when insight is translated into measurable commitments, reviewed through structured mechanisms, and tied to visible outcomes rather than left at the level of consultation alone.

Institutions often say that partnerships are important, but cannot say with confidence which partnerships are performing well, which are under strain, and which are absorbing effort without creating strategic value. That gap persists because they have not turned relationship management into a measurable discipline. A future-ready PRM model therefore needs a clear performance architecture. This is more demanding than tracking activities. Counting meetings, communications, or engagement events may produce volume, but not necessarily insight. Performance architecture asks a harder question: how do we know whether a relationship is working?

The answer will vary by segment. The metrics that matter for a regulator are not the same as those that matter for a strategic supplier, a technology collaborator, a government entity, or a community-facing actor. That is why a serious PRM model distinguishes among different performance dimensions. These often include responsiveness, issue resolution, quality of coordination, strategic contribution, trust, risk exposure, satisfaction, continuity, compliance, value creation, and the efficiency of journeys and touchpoints. Some of these can be measured quantitatively. Others require qualitative judgment. Both matter.

The important thing is not to reduce relationship measurement to simplistic dashboards that privilege what is easiest to count. The point of measurement is not to make PRM look numerically sophisticated. It is to make relationship performance visible enough to manage. Visibility changes behavior. Once performance becomes visible, weak relationships can be addressed earlier. High-performing models can be replicated. Segment-level patterns can be compared. Escalation can be based on evidence rather than sentiment. Review discussions become more disciplined. Internal teams begin to understand that relationship quality is not a matter of impression alone, but something that can be observed, discussed, and improved.

A strong PRM model therefore needs not only KPIs, but also dashboard logic. What should be shown at what level? Which indicators matter for operational teams? Which for leadership? Which for governance forums? What should be tracked continuously, and what should be reviewed periodically? Which insights should trigger intervention? Dashboards without review logic become decorative. Review logic without visible indicators becomes subjective. The two must work together.
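As an illustration of dashboard logic, the sketch below weights performance dimensions differently by segment and flags relationships that fall below a review threshold. The segments, weights, 0-to-1 scoring scale, and threshold are hypothetical. Qualitative dimensions such as trust are assumed to have been scored through structured judgment beforehand.

```python
# Hypothetical segment-specific weights over performance dimensions (each set sums to 1.0).
SEGMENT_WEIGHTS = {
    "regulator": {"compliance": 0.5, "responsiveness": 0.3, "trust": 0.2},
    "strategic_supplier": {"continuity": 0.4, "issue_resolution": 0.4, "value_creation": 0.2},
}

# Assumed threshold below which a relationship is raised for review.
INTERVENTION_THRESHOLD = 0.6

def health_score(segment, scores):
    """Weighted average of dimension scores (each on a 0-to-1 scale) for a segment."""
    weights = SEGMENT_WEIGHTS[segment]
    return sum(weight * scores[dimension] for dimension, weight in weights.items())

def needs_intervention(segment, scores):
    """True when the weighted score falls below the review threshold."""
    return health_score(segment, scores) < INTERVENTION_THRESHOLD

regulator_scores = {"compliance": 0.9, "responsiveness": 0.5, "trust": 0.5}
supplier_scores = {"continuity": 0.4, "issue_resolution": 0.5, "value_creation": 0.8}

print(needs_intervention("regulator", regulator_scores))
print(needs_intervention("strategic_supplier", supplier_scores))
```

A composite score like this is a trigger for a review conversation, not a substitute for it. The review forum still needs to look at the underlying dimensions and the qualitative context before deciding how to intervene.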

This also means distinguishing monitoring from reporting. Monitoring is about managing relationships actively while they are happening. Reporting is about documenting outcomes, trends, and accountability over time. Both matter, but they serve different functions. Institutions often conflate them, which leads to PRM that is either over-reported and under-managed, or actively managed but never institutionalized.

Then there are feedback loops. PRM is not a one-time design exercise. It is a managed system that must learn. The institution should gather insight from partners, internal teams, performance data, issue logs, governance reviews, and operational experience, then use that information to refine journeys, strengthen protocols, adjust segmentation logic, and improve role clarity where needed. This is how the model remains relevant as the ecosystem evolves.

That last point matters. Relationship environments do not stay still. New actors emerge. Strategic priorities shift. Regulatory pressures change. Delivery dependencies deepen or weaken. A static model quickly becomes obsolete. A mature PRM capability therefore includes not only performance measurement, but continuous improvement as a design principle. That is what makes performance architecture so important. It turns PRM from a well-meaning structure into a learning system.

Relationships improve only when performance becomes visible enough to manage, not merely important enough to discuss.

OHK has observed that institutions often claim relationships are strategic while still managing them through assumptions, sentiment, and activity counts rather than through visible performance discipline. The warning signs are usually quite clear: (i) meetings, interactions, and outreach are tracked, but the quality or value of relationships is not, (ii) dashboards favor what is easy to count rather than what is strategically meaningful, (iii) relationship reviews rely on anecdote more than on structured indicators, (iv) underperformance is recognized late because no one has defined what healthy performance looks like, and (v) feedback is gathered occasionally but not converted into continuous improvement. These patterns create the impression of oversight without the substance of management. Over time, they reveal a deeper issue: the institution is active in its relationships, but not yet visible to itself in how those relationships perform. That is why performance architecture matters so much. Once relationship quality can be seen, it can be reviewed, improved, and governed with much greater seriousness.

Put Technology in the Right Place: Enable the Model, Do Not Let It Define the Model

E.ON UK, Coventry, United Kingdom: E.ON UK is a useful example for this section because its technology story is rooted in operational need rather than in software fashion. Microsoft reports that E.ON UK moved away from spreadsheet-based and manual processes by using Dynamics 365 Customer Service, Dynamics 365 Field Service, and related tools to support office staff and field technicians, with 1,500 technicians using the Field Service mobile app to manage appointments. The lesson is not simply that new software was adopted, but that technology was used to support a clearer service model, better coordination, and more manageable field operations. The platform enables the work; it does not define the institutional logic on its own.

Only after these foundations are established should the institution move decisively into technology. This is one of the most misunderstood stages in PRM. Technology matters enormously, but it matters in the right place. It should enable the model, not define it. The right platform can support partner records, segmentation logic, workflow execution, issue tracking, dashboards, collaboration, document integration, and visibility across the enterprise. It can reduce dependence on inboxes, spreadsheets, fragmented records, and informal memory. It can make relationship intelligence searchable, usable, and durable.

But none of that changes the basic truth: technology is downstream of operating design.

A platform can support categories. It cannot decide what the categories should be.

A workflow engine can automate process. It cannot define the institutional logic that process should reflect.

A dashboard can display indicators. It cannot determine what success should mean for different relationship types.

A case-management tool can route issues. It cannot decide who should own escalation or what governance should apply.

Software can digitize logic. It cannot invent logic on behalf of the institution. This is where many institutions go wrong. They sense that coordination is weak, visibility is poor, and external relationships are becoming more important. So they move quickly into solution mode. They ask for a platform, a portal, a dashboard, or a workflow tool. But if the underlying model remains vague, the technology only digitizes ambiguity. It creates a visible layer of activity on top of unresolved design questions.

That is why the most intelligent PRM efforts begin not with vendor demonstrations, but with operating questions.

What kinds of relationships define the institution’s ecosystem?

Which are strategic, and why?

What ownership models are required?

What journeys and touchpoints matter most?

Where are the current failures?

What governance is missing?

What should be measured?

What internal behaviors must change before any platform can add real value?

These are the main questions. Tools, platforms, and features are the secondary ones. When institutions reverse that order, technology does not create clarity. It scales ambiguity. This is also why technology selection should focus on fit rather than spectacle. The right question is not, “Which platform has the longest feature list?” It is, “What technology environment best enables the model we have defined?” Sometimes that will be a dedicated PRM layer. Sometimes it will be a combination of existing systems, workflow tools, analytics, and governance routines. Sometimes the right answer is phased enablement rather than immediate full-scale implementation.

What matters is coherence, usability, integration, and the institution’s capacity to sustain the solution over time. A common mistake is to overestimate the transformational power of software while underestimating the cultural and operational work required for adoption. A platform can be implemented quickly and still fail if roles remain unclear, data ownership is disputed, leadership does not reinforce usage, and governance forums do not use the outputs meaningfully. This is why technology is not the starting point. It is the amplifier that matters once the architecture is already clear.

Software does not create clarity; it only amplifies the clarity, or confusion, already built into the model.

OHK has observed that many institutions delay real progress when they ask technology to solve design questions that should have been settled before any platform discussion begins. The early warning signs are often familiar: (i) digital tools are introduced before relationship categories, ownership, and governance have been defined, (ii) leaders expect platforms to create visibility where the institution has not yet agreed what should actually be measured, (iii) workflows are automated before the underlying process has been made coherent, (iv) teams assume that more data will solve problems that are fundamentally about unclear operating logic, and (v) software selection advances faster than institutional agreement on what the model is meant to achieve. These patterns create visible momentum, but not necessarily useful capability. Over time, they reveal that technology has been asked to substitute for design rather than support it. Software can support logic but cannot invent it, which is why institutions that introduce technology too early often end up digitizing ambiguity rather than resolving it.

Operationalize and Transfer Capability: A Model Is Real Only When the Institution Can Run It

Chatham x Lakeshore Limited Partnership / Hydro One, Ontario, Canada: A practical example of operationalization can be seen in Hydro One’s Chatham x Lakeshore Limited Partnership application materials. These state that the project’s in-service advancement was supported by early and meaningful engagement and partnership with local Indigenous communities, and by a collaborative approach to project planning with residents and community stakeholders through the Environmental Assessment process, which allowed local needs to be integrated into planning. This is useful because it shows that relationship work is not complete when consultation happens. It becomes meaningful when it changes planning, reduces friction, supports agreement-making, and helps the institution carry the model through to implementation in a durable way.

A PRM model is not real because it has been designed. It becomes real when the institution can run it reliably. This is where operationalization matters. Operationalization means translating framework into behavior. It means implementing processes, socializing roles, validating workflows, training teams, documenting standards, setting up governance forums, launching dashboards, and embedding review cycles into regular management practice. It means turning architecture into routine. This stage is often underestimated because it looks less dramatic than strategy design or technology selection. But in reality, it is the stage at which the model either becomes institutionalized or remains performative.

A future-ready PRM capability therefore requires adoption discipline. People must understand what has changed and why. Relationship owners must know what is expected of them. Governance forums must know what decisions they are meant to make. Operational teams must understand how journeys work in practice. Leadership must reinforce the model not only rhetorically, but behaviorally. If internal incentives and habits remain unchanged, then the framework may exist on paper while the institution continues to function through older habits.

This is also where documentation matters. Playbooks, role definitions, process documentation, governance protocols, and training materials are not administrative accessories. They are part of how capability becomes transferable and durable. If the model cannot be explained, taught, and repeated, it cannot survive leadership changes, staff movement, or organizational restructuring.

Then there is knowledge transfer. This deserves special emphasis. If the model works only while consultants remain present, then the institution has not built a capability. It has rented one. A mature implementation plan therefore includes capability transfer from the beginning. Internal teams must learn not only how to use the model, but how to maintain it, evolve it, and govern it after implementation support ends. That means workshops, guided adoption, co-design with internal teams, practical training, and handover that goes beyond static documents. It also means designing for internal ownership early enough that the institution does not experience the framework as something imposed from outside.

A phased rollout is often wiser than immediate full deployment. Not every relationship category needs the same level of maturity at once. Some institutions benefit from piloting the model in one segment, one function, or one strategic relationship group before scaling it further. What matters is not speed for its own sake. It is institutional absorbability. The key test of operationalization is simple: can the institution continue to run the model when the original design team steps away? If the answer is yes, then PRM has moved from concept to capability. If the answer is no, then more design work may still be needed, but more importantly, more institutionalization is required.

This is the threshold between designing a framework and building an enduring operating discipline.

A framework is not a capability until the institution can run it without leaning on the people who designed it.

OHK has observed that many transformation efforts appear complete at the moment of delivery, only to weaken soon afterward because the institution was never truly prepared to run the model on its own. The signs are usually practical rather than dramatic: (i) teams can describe the framework but do not yet know how to apply it consistently in daily work, (ii) documentation exists, but roles and routines have not been socially adopted, (iii) governance forums are defined but not yet embedded into management practice, (iv) training focuses on awareness rather than real capability transfer, and (v) the institution still depends on external advisors to interpret, maintain, or adapt the model. These conditions often go unnoticed while implementation energy is high. But over time, they expose a decisive gap: the organization has received a framework without fully internalizing it as a repeatable capability. That is why operationalization is the real test of seriousness. If the institution cannot run the model after handover, then the work has not yet become durable.

A Practical Maturity Roadmap: Visibility, Control, and Optimization Must Follow in Sequence

Hydro One, Ontario, Canada: Hydro One also offers a useful maturity illustration because its materiality work shows the progression from visibility to institutional prioritization. The company explains that its materiality assessment identified the sustainability topics that matter most to stakeholders, partners, and the business, and that these topics form the basis for its sustainability strategy and reporting. In practical terms, this is exactly what a maturity roadmap should do: first make the environment visible, then define what matters, and only afterward build more structured control and performance logic around it. It is a useful reminder that institutions do not optimize relationships well until they first decide, with discipline, which relationship issues are most material.

A useful way to think about PRM implementation is through a three-stage maturity roadmap.

The first stage is visibility: At this stage, the institution maps its ecosystem, identifies major pain points, creates meaningful categories, and gains a baseline understanding of current maturity. It begins to see where relationships are fragmented, where ownership is unclear, where dependency sits, and where coordination is failing. The goal at this stage is clarity.

The second stage is control: Here the institution defines segmentation, lifecycle stages, journeys, governance, roles, escalation logic, and performance indicators. It introduces discipline into relationships that were previously managed inconsistently. It creates standards without trying to over-engineer every interaction. The goal at this stage is coherence.

The third stage is optimization: Only here does the institution begin to scale the model through technology, integrated data flows, structured dashboards, analytics, and continuous improvement loops. The model becomes more visible, more efficient, and more adaptive. The goal at this stage is performance.

Not every institution moves through these stages at the same speed. Not every relationship category needs the same level of sophistication. Some may remain relatively light. Others may become highly structured. But the sequence matters.

Visibility before control. Control before optimization. Architecture before automation.

This sequence is the practical discipline that prevents PRM from becoming either too abstract or too technologically driven too early. It also helps leadership understand that maturity is not measured by how much software has been implemented, but by how clearly the institution has moved from fragmented handling to governed capability. A maturity roadmap is valuable because it gives PRM an implementation logic without implying that all institutions should look identical. It creates direction without forcing false uniformity. That is exactly what a good operating model should do.
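The sequencing rule can also be expressed as a simple gate. The sketch below is a hedged illustration in Python, not an assessment tool: the criteria attached to each stage are hypothetical labels loosely drawn from the stage descriptions above, and a real maturity assessment would define and evidence them differently.

```python
STAGES = ("visibility", "control", "optimization")

# Hypothetical criteria per stage; the labels are assumptions for illustration.
CRITERIA = {
    "visibility": {"ecosystem_mapped", "pain_points_identified", "baseline_assessed"},
    "control": {"segmentation_defined", "governance_defined", "kpis_defined"},
    "optimization": {"platform_enabled", "dashboards_live", "improvement_loop_running"},
}


def current_stage(completed):
    """Return the highest stage reached in sequence, or None if none is secured.

    A later stage only counts once every earlier stage is fully satisfied,
    which is the point of "visibility before control, control before optimization".
    """
    reached = None
    for stage in STAGES:
        if CRITERIA[stage] <= completed:  # all criteria for this stage are met
            reached = stage
        else:
            break  # a gap here blocks credit for any later stage
    return reached


# An institution that invested in technology first gets no credit for it.
tech_first = {"platform_enabled", "dashboards_live", "improvement_loop_running"}
sequenced = CRITERIA["visibility"] | CRITERIA["control"]
```

In this sketch, `current_stage(tech_first)` returns `None` while `current_stage(sequenced)` returns `"control"`, which mirrors the argument that implemented software is not itself evidence of maturity.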

Visibility must come before control, and control must come before optimization.

OHK has observed that institutions often struggle with PRM maturity not because they are moving too slowly, but because they try to operate at later stages before earlier ones have been secured. The symptoms are usually easy to recognize: (i) optimization is discussed before visibility has been established, (ii) technology investment is prioritized before governance and role clarity exist, (iii) institutions try to measure performance before defining categories and expectations, (iv) dashboards are built before the organization has agreed what progression should look like, and (v) leadership expects mature outcomes from a model that is still at an early stage of institutional coherence. These mistakes are common because maturity can look like a technology problem when it is actually a sequencing problem. Over time, they reveal that the institution is trying to skip steps in the architecture of capability. That is why the sequence matters so much: visibility before control, control before optimization, and architecture before automation.

What Part II Has Really Described: The Design and Institutionalization of PRM

UK Power Networks, London, United Kingdom: UK Power Networks is a useful closing example because it shows how engagement, outcomes, operational reporting, and strategic priorities can be connected inside one institutional narrative. Its annual review presents stakeholder engagement not as a standalone communications activity, but as part of how the company delivers outcomes, responds to customer and community needs, and improves action on issues such as decarbonisation and supply-chain emissions. That makes it a good final example for Part II because it reflects the broader argument of the article: PRM is not one isolated workstream. It becomes meaningful when discovery, design, governance, performance visibility, and operational follow-through are all treated as parts of one coherent institutional capability.

By this stage, it is useful to make the through-line explicit. The ecosystem discovery, mapping, segmentation, lifecycle design, governance structures, KPI logic, technology positioning, implementation planning, and operationalization described in this article are not separate management exercises. Together, they form the design and institutionalization of PRM.

This matters because PRM is often misunderstood as either a software category or a narrow engagement function. It is neither. Properly understood, it is the integrated operating model through which an institution manages partner relationships across the full lifecycle. Technology supports it, but does not define it. Communications contribute to it, but do not exhaust it. Procurement may intersect with it, but does not contain it. PRM sits above these elements as the institutional logic that brings them together. That is why Part II matters. It is the bridge between principle and system.

Part I argued that utilities need a more structured way to manage complex external ecosystems. Part II has shown what that structure looks like when translated into design choices, governance mechanisms, workflows, review routines, and performance disciplines. Once that is understood, the question is no longer whether PRM needs to be built. The question becomes how PRM should be enabled within the wider enterprise environment. That is the purpose of Part III.

Part III will examine what should sit in PRM, what should remain in ERP, CRM, SRM, workflow, and analytics layers, how these systems should connect, and how institutions can avoid unnecessary dependency while preserving long-term control. If Part I made the strategic case for PRM, and Part II showed how it should be built, then Part III will ask how it should be digitally enabled as part of the enterprise stack.

OHK has observed that one of the most common sources of confusion in PRM work is that institutions treat discovery, governance, performance, implementation, and technology as if they were separate improvement exercises rather than parts of one integrated capability. The warning signs usually appear when (i) different workstreams advance without a shared design logic, (ii) PRM is spoken about as either a platform or an engagement function rather than an operating model, (iii) governance and process design are handled independently of lifecycle and segmentation, (iv) implementation is treated as rollout rather than institutionalization, and (v) technology begins to define the language of the effort before the institution has defined its own. These patterns create fragmentation even within transformation itself. Over time, they reveal the deeper truth that Part II has been building toward: PRM only becomes real when discovery, design, governance, performance, enablement, and adoption are understood as one institutional architecture. That is the point at which the institution stops discussing PRM as an idea and starts building it as a disciplined capability.

PRM is not a collection of activities, meetings, or systems; it is the institutional architecture through which relationships are turned into coordinated value, governed performance, and durable execution.

That is where the trilogy moves next.
